Background

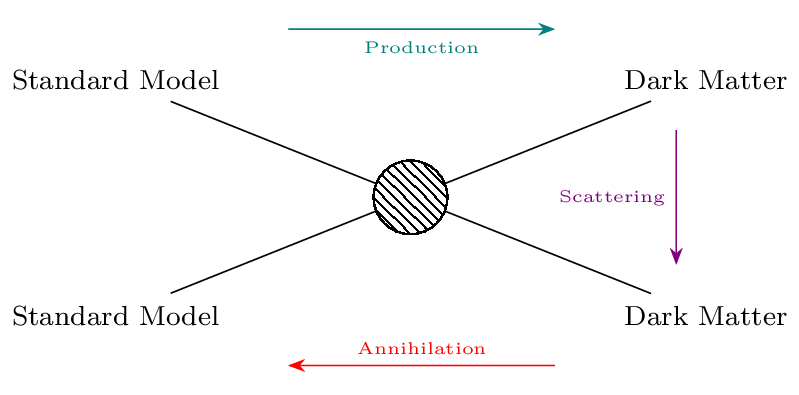

Dark matter makes up ~25% of the universe’s mass-energy content and is the key component in structure formation. Our knowledge of dark matter currently comes solely from its gravitational influence, but revealing its particle nature will require identifying its other interactions, both with itself and with standard model particles. Fig 1 shows the three processes that such interactions would allow: production of dark matter through collisions of standard model particles, scattering between dark matter and standard model particles, and annihilation of dark matter into standard model particles. Each of these has an associated detection method: we could produce dark matter in particle colliders (“collider searches”), see recoils of standard model particles due to incident dark matter (“direct detection”), or identify standard model particles produced by dark matter annihilations (“indirect detection”). A final possible interaction, included in “indirect detection” but involving only a single dark matter particle, is the spontaneous decay of dark matter into standard model particles.

Cartoon illustrating the ways in which dark matter may interact with Standard Model particles.

We are concerned here with dark matter annihilation and decay. Signals of these are typically sought by identifying astrophysical objects whose kinematics indicate that they are particularly rich in dark matter, which include the Milky Way, dwarf spheroidal galaxies (dSphs) in the Local Group and massive clusters further afield. One then targets these objects with telescopes sensitive to gamma rays, into which the standard model particles produced by dark matter would be expected to decay. These searches have ruled out a thermal relic origin for WIMP dark matter at low mass, with the excluded mass range depending on the annihilation channel. Such methods, however, have two important disadvantages:

- by focusing on specific objects they necessarily miss gamma-rays coming from the interactions of any dark matter not in the objects,

- they are susceptible to systematic errors due to gamma-rays produced in those objects by non-dark matter, i.e. baryonic, means.

To avoid these potential pitfalls, we instead forward-model the gamma-ray flux from annihilation and decay pixel-by-pixel across the full sky using BORG-based models of the large-scale dark matter distribution. This enables a field-level inference of annihilation and decay rates by comparison with all-sky data from the Fermi Large Area Telescope [1].

Predicting annihilation and decay fluxes

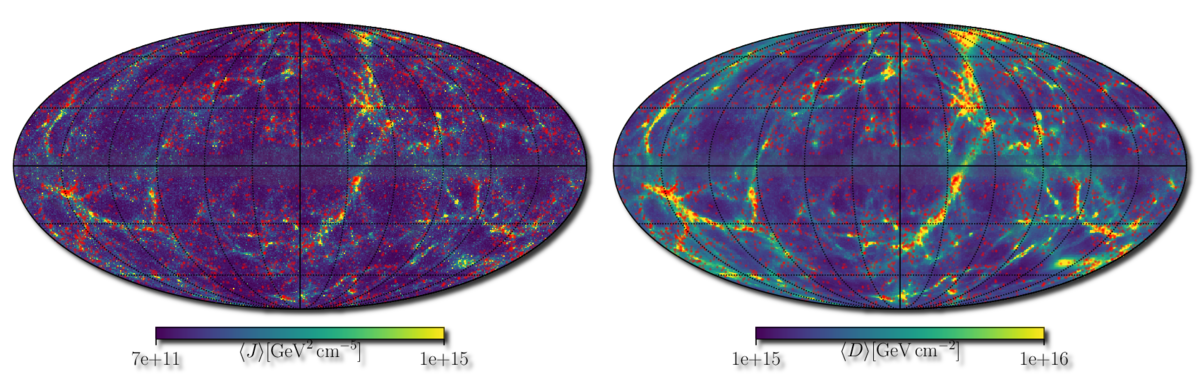

Our method leverages CSiBORG, a suite of 101 high-resolution N-body simulations with initial conditions spanning the posterior of the 2M++ BORG-PM chain. Each box provides a plausible realisation of the dark matter distribution out to ~200 Mpc, including the clumping of dark matter into halos. We use these to predict the annihilation (decay) flux that would be seen along each line of sight on the sky for a given annihilation cross-section (decay rate) and channel, which we then project onto a HEALPix grid to match the resolution of Fermi. This is shown in Fig 2 in terms of the “J-factor” (left) and “D-factor” (right), which describe the astrophysical contributions to the flux (i.e. without the particle physics terms). We assume a Poisson likelihood for the measured flux in each pixel and marginalise over the CSiBORG realisations, a model for substructure within each halo, and a set of templates that describe the contribution from non-dark matter sources. This lets us constrain the parameters of dark matter interactions using all nearby dark matter that is resolved by CSiBORG.
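For reference, the J and D factors are conventionally defined as line-of-sight integrals of the dark matter density. The expressions below follow the standard convention for self-conjugate dark matter and are a sketch of the usual definitions, not a transcription of the paper's notation. The symbols are assumptions introduced here: \(\rho\) is the dark matter density, \(s\) the distance along the line of sight, \(\Delta\Omega\) the solid angle of a pixel, \(m_\chi\) the particle mass, \(\langle\sigma v\rangle\) the annihilation cross-section, \(\Gamma\) the decay rate, and \(\mathrm{d}N/\mathrm{d}E\) the photon spectrum per annihilation or decay.

```latex
\begin{align}
J &= \int_{\Delta\Omega} \mathrm{d}\Omega \int_{\rm l.o.s.} \rho^2(s, \Omega)\, \mathrm{d}s,
&
D &= \int_{\Delta\Omega} \mathrm{d}\Omega \int_{\rm l.o.s.} \rho(s, \Omega)\, \mathrm{d}s,
\\
\frac{\mathrm{d}\Phi_{\rm ann}}{\mathrm{d}E} &= \frac{\langle\sigma v\rangle}{8\pi m_\chi^2}\, \frac{\mathrm{d}N_{\rm ann}}{\mathrm{d}E}\, J,
&
\frac{\mathrm{d}\Phi_{\rm dec}}{\mathrm{d}E} &= \frac{\Gamma}{4\pi m_\chi}\, \frac{\mathrm{d}N_{\rm dec}}{\mathrm{d}E}\, D.
\end{align}
```

The annihilation flux scales with the density squared because two particles must meet, whereas the decay flux scales linearly with density. This is why the two maps weight halos and diffuse structure differently.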

Full-sky Mollweide projection in galactic coordinates of the ensemble mean J and D factors over the CSiBORG realisations. These are proportional to the gamma-ray flux produced by dark matter annihilation and decay respectively.
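The per-pixel Poisson likelihood and the marginalisation over realisations described above can be sketched as follows. This is a minimal illustration under simplifying assumptions (a single energy bin, equal weight for each realisation, no instrument response) and not the analysis code. All function names, array shapes and the toy numbers are hypothetical.

```python
import math
import numpy as np

# Element-wise log-gamma; avoids requiring SciPy for this sketch.
_lgamma = np.vectorize(math.lgamma)

def poisson_loglike(counts, expected):
    """Sum over pixels of ln Poisson(counts | expected); expected must be > 0."""
    counts = np.asarray(counts, dtype=float)
    expected = np.asarray(expected, dtype=float)
    return float(np.sum(counts * np.log(expected) - expected - _lgamma(counts + 1.0)))

def expected_counts(amplitude, dm_template, bg_templates, bg_norms):
    """Scaled dark matter template plus a linear combination of background templates.

    dm_template: (n_pix,), bg_templates: (n_bg, n_pix), bg_norms: (n_bg,).
    """
    return amplitude * np.asarray(dm_template) + np.asarray(bg_norms) @ np.asarray(bg_templates)

def marginal_loglike(counts, amplitude, dm_templates, bg_templates, bg_norms):
    """Log of the likelihood averaged over realisations (assumed equally weighted)."""
    logls = np.array([
        poisson_loglike(counts, expected_counts(amplitude, t, bg_templates, bg_norms))
        for t in dm_templates
    ])
    peak = logls.max()  # subtract the peak before exponentiating for numerical stability
    return float(peak + np.log(np.mean(np.exp(logls - peak))))

# Toy usage with made-up numbers: 3 realisations, 12 pixels, one flat background.
rng = np.random.default_rng(0)
n_pix = 12
dm_templates = rng.uniform(0.5, 1.5, size=(3, n_pix))
bg_templates = np.ones((1, n_pix))
bg_norms = np.array([5.0])
counts = rng.poisson(2.0 * dm_templates[0] + 5.0)
print(marginal_loglike(counts, 2.0, dm_templates, bg_templates, bg_norms))
```

In a full inference the amplitude (standing in for the cross-section or decay rate) and the background normalisations would be sampled jointly. Here they are simply passed in as fixed numbers.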

Bounding DM interactions

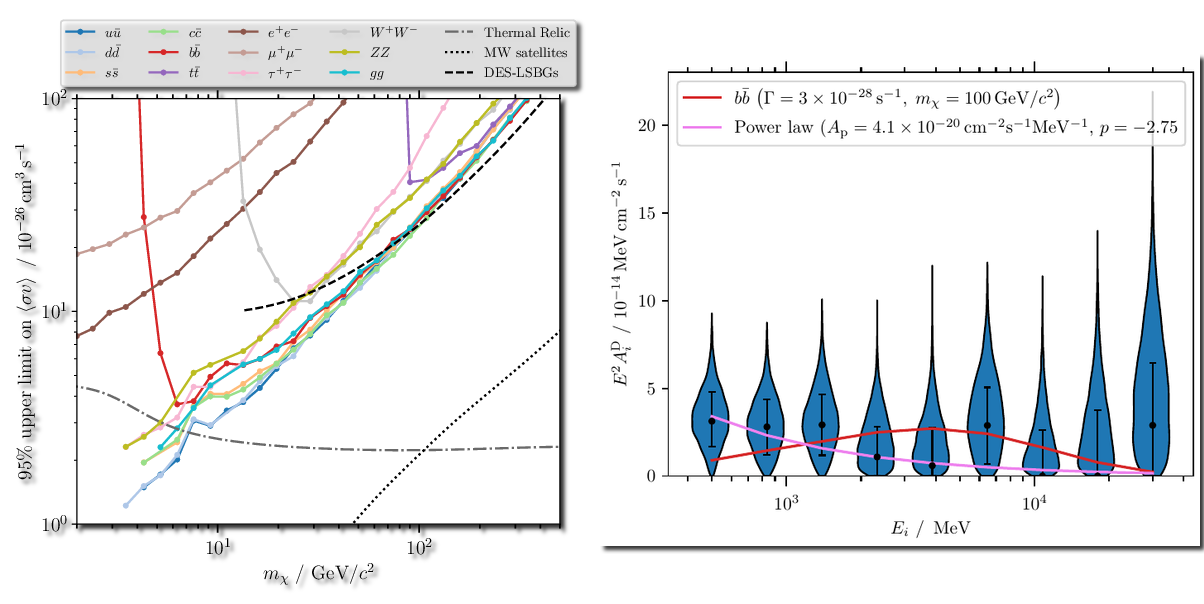

We find no evidence for enhanced gamma-ray flux tracing dark matter density squared, as would be expected in an annihilation model. This allows us to set constraints on the cross-section, which we show in Fig 3 (left) as a function of dark matter particle mass for a range of annihilation channels. The grey dot-dashed line shows the thermal relic cross-section, which is the value needed to explain the current dark matter abundance through thermal freeze-out in the early universe (the standard production mechanism for WIMPs). Mass ranges where our bounds lie below this line are those where the thermal relic scenario is ruled out. The black dashed line shows a previous constraint from cross-correlation of gamma-ray flux with the positions of low surface brightness galaxies, and the dotted line is from dSphs in the Local Group. The dSph constraints are much stronger than ours because dSphs are very close, leading to a large predicted flux (dSphs are not included in our analysis because they are below the CSiBORG resolution limit). However, they are sensitive to flux contributions from baryonic processes. In our approach, significant constraining power comes from regions largely devoid of baryons, such as the filaments that connect halos.

The right panel of Fig 3 shows our constraint on the flux due to dark matter decays, separately for each of Fermi’s energy bins. That many of these are centred away from zero indicates that we do detect gamma rays with a flux distribution across the sky that traces the dark matter density, as expected for decays. However, the spectrum of the signal is much more closely aligned with a power law than with the expectation from decay (pink vs red line), suggesting a more mundane, baryonic origin such as blazars.
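The comparison between spectral shapes can be illustrated with a simple chi-squared fit to per-bin fluxes. The sketch below assumes Gaussian errors on each bin and uses entirely synthetic numbers. The curved "bump" shape is a hypothetical stand-in for a peaked spectrum and is not a real \(b\bar{b}\) decay spectrum, and the function names are not taken from the analysis.

```python
import numpy as np

def fit_amplitude(flux, sigma, shape):
    """Best-fit amplitude A and chi-squared for the model flux = A * shape."""
    w = 1.0 / sigma**2
    amp = np.sum(w * flux * shape) / np.sum(w * shape**2)  # analytic least-squares solution
    chi2 = np.sum(w * (flux - amp * shape) ** 2)
    return float(amp), float(chi2)

def fit_power_law(energy, flux, sigma, indices=None):
    """Grid search over the spectral index p for flux = A * energy**(-p).

    Returns (index, amplitude, chi2) for the grid point with the lowest chi-squared.
    """
    if indices is None:
        indices = np.linspace(0.0, 4.0, 401)
    fits = [(float(p),) + fit_amplitude(flux, sigma, energy ** (-p)) for p in indices]
    return min(fits, key=lambda f: f[2])

# Synthetic illustration only: made-up energies and fluxes in arbitrary units.
energy = np.geomspace(1.0, 100.0, 8)
flux = 3.0 * energy ** (-2.2)
sigma = 0.1 * flux

index, amp, chi2_power_law = fit_power_law(energy, flux, sigma)

# Hypothetical peaked shape (log-normal bump), fitted with a free amplitude.
bump = np.exp(-0.5 * (np.log(energy / 10.0) / 0.8) ** 2)
_, chi2_bump = fit_amplitude(flux, sigma, bump)

print(index, amp, chi2_power_law < chi2_bump)
```

A lower chi-squared for the power law than for the peaked shape is the kind of evidence that would point to a non-dark-matter origin for the signal, although a real comparison would use the measured posteriors in each energy bin instead of Gaussian error bars.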

In conclusion, we have used BORG to open yet another field – dark matter indirect detection – to full-sky, field-level Bayesian inference. In principle this allows all the information to be extracted from gamma-ray surveys and thus represents the most promising astrophysical method for uncovering the non-gravitational interactions of dark matter.

Left: 2\(\sigma\) bounds on the dark matter annihilation cross-section for various different channels (coloured lines). The grey dot-dashed line is the cross-section of a thermal relic WIMP, and the black dashed and dotted lines show literature constraints from galaxy cross-correlations and Local Group dwarf spheroidals respectively. Right: Flux contribution with the spatial distribution expected from dark matter decay in each Fermi energy bin. The red line is the spectrum expected from decays to \(b\bar{b}\), while the pink line, preferred by the data, is the best-fit power-law spectrum.

[1] D. J. Bartlett, A. Kostic, H. Desmond, J. Jasche & G. Lavaux, 2022, Constraints on dark matter annihilation and decay from the large-scale structure of the nearby universe, Phys Rev D submitted, arXiv:2205.12916

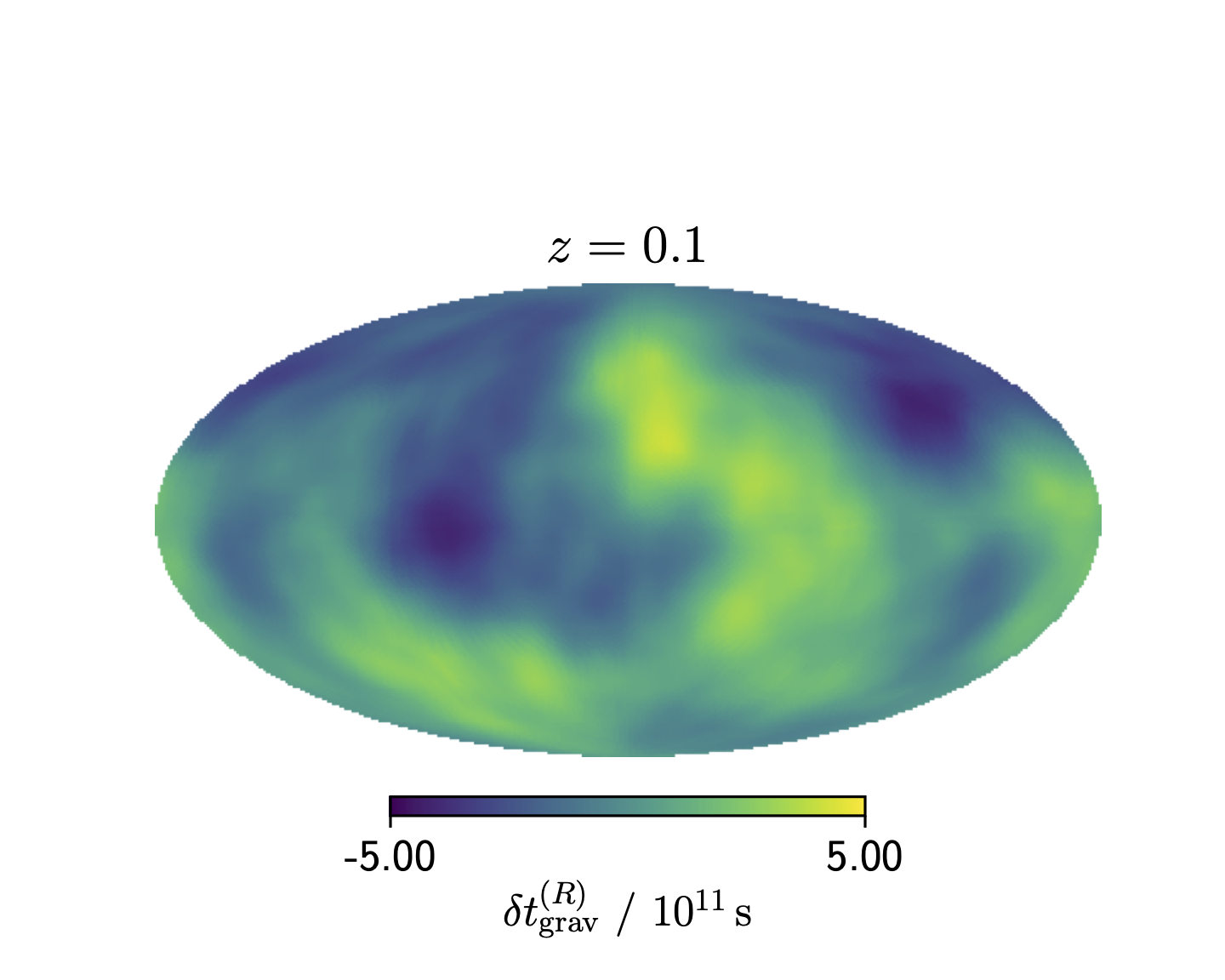

Mollweide projection in equatorial coordinates of the ensemble mean of the time delay fluctuations at \(z=0.1\) from wavelengths resolved by the BORG reconstruction.

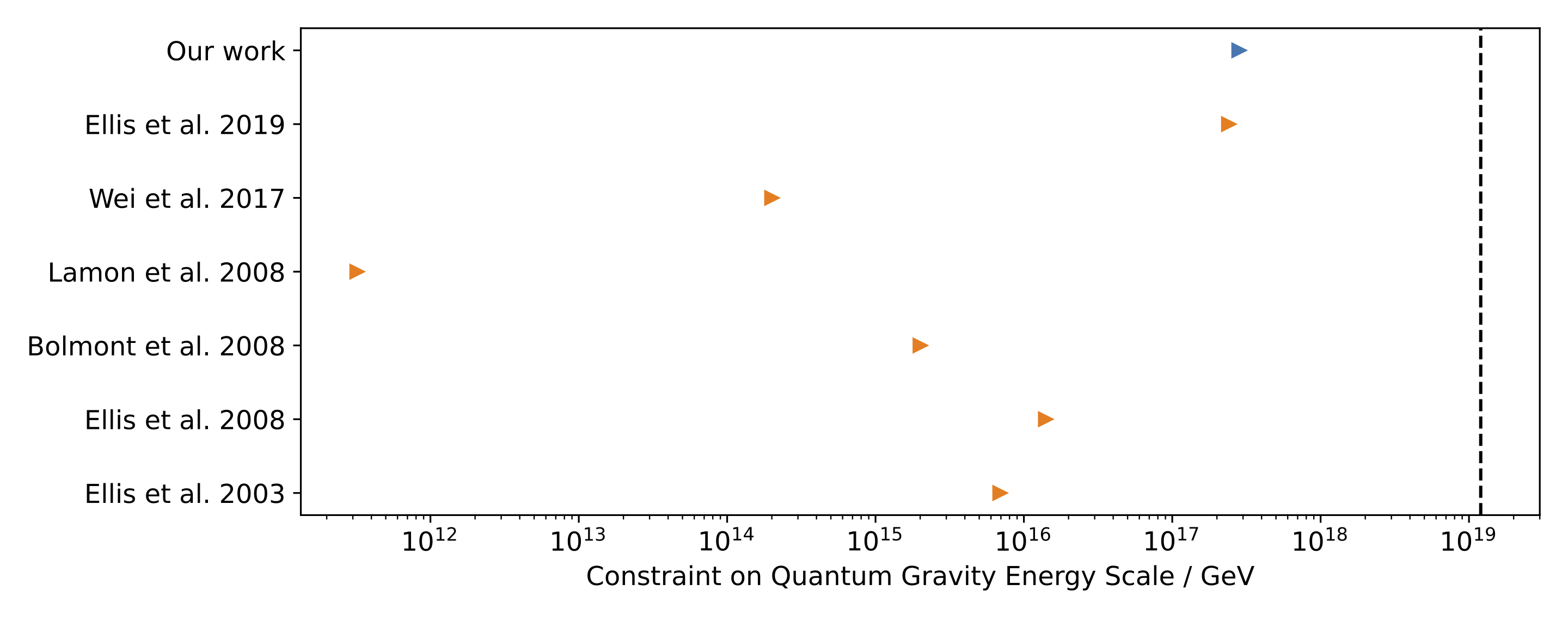

Lower limits on the quantum gravity energy scale (\(1 / \ell_{\rm QG}\)) from time delay studies which use multiple astrophysical sources. Our work provides the tightest constraint to date. The dashed vertical line is the Planck energy, and it is expected that the quantum gravity energy scale has approximately this value.